Universities across Europe are rethinking how research quality should be measured. A new proposal from researchers at Aalborg University argues that academic evaluation must move beyond simple publication metrics and instead recognize broader scientific contributions, openness, and societal impact.

Kathrine Bjerg Bennike and Poul Meier Melchiorsen, Aalborg Universitetsbibliotek

In 2022, the Coalition for Advancing Research Assessment (CoARA) introduced the Agreement on Reforming Research Assessment (ARRA), marking a shift in the European Union’s approach to evaluating research.[1]

ARRA represents a departure from sole reliance on narrow quantitative indicators in research assessment. Instead, it promotes qualitative assessment methods and encourages recognition of a broader and more diverse range of research outputs.[2]

In December 2025, Aalborg University and its partners hosted an official high-level EU Presidency conference on reforming research assessment.[3] The conference gathered stakeholders from across the globe to discuss how to move from principle to practice.

First, the conference stressed the need for European and global coordination and alignment of evaluation mechanisms. This includes collaboration between policymakers and representatives from scientific institutions and communities.

Second, open and collaborative science is key to sustainable solutions and innovative scientific outputs.

Finally, there is a need for investment in monitoring progress via metascience to ensure that new frameworks lead to positive change in assessment, and ultimately better science (Pedersen et al., 2026).

The conference provided examples of tools and frameworks for research assessment that are aligned with the principles of the Declaration on Research Assessment (DORA) and CoARA. One of these examples was the Aalborg University Research and Innovation Indicator (Stoustrup et al., 2023).

Aalborg University Research and Innovation Indicator

In 2022, Aalborg University introduced a new internal model for distributing performance-based research funding. Behind it lies a familiar tension in research policy: how to balance transparent metrics with the growing demand for more holistic and responsible assessment.

Aalborg University’s Strategic Council for Research and Innovation (SRFI) is responsible for the indicator, which was developed in collaboration among all four faculties, the Finance Department, the Innovation Department and the University Library.

The task was clear, but far from simple: develop a framework that captures research quality across different disciplines, aligns with international standards, and remains manageable in day‑to‑day academic practice.

Two parts, two purposes

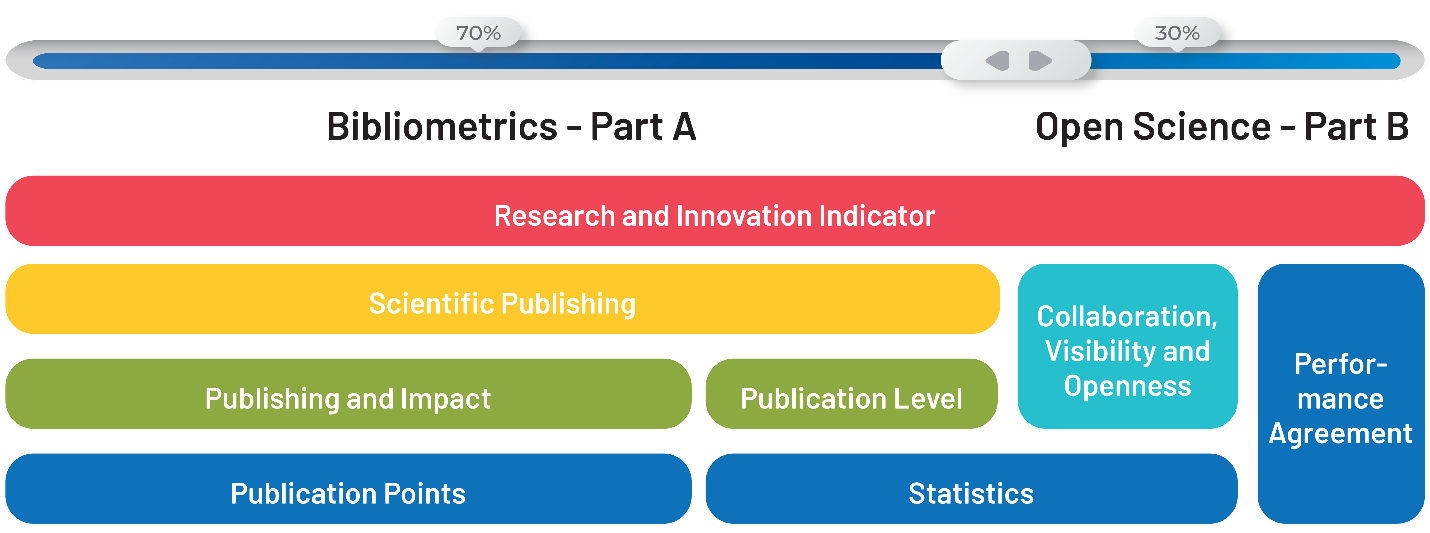

The result was a two-part indicator. Part A is bibliometric and combines publication counts with a weighted citation measure.

This part partly follows suggestions for further development of a previous national research indicator (Uddannelses- og Forskningsministeriet, 2019).

The aim is to recognize a wider range of scholarly outputs and to emphasize the actual impact of published work rather than rewarding journal prestige. Part A is subject-neutral and is applied directly to the internal allocation of basic research funding.

Part B is inspired by the Open Science agenda and international initiatives such as DORA and CoARA. Part B focuses on department-level strategies rather than individual performance.

Each department must define annual goals related to openness, visibility and collaboration, which are formalized in departmental performance agreements with the faculty. The performance agreements reflect the diversity and different strengths of the departments.

For example, a social sciences and humanities (SSH) department might focus on initiating dialogue on how its research areas contribute to “innovation and impact”, or on establishing a national open access journal in a field lacking an appropriate outlet. Other departments might set targets for strengthening media visibility or identify partnerships with industry or government agencies to generate societal impact.

Balancing transparency: from single metrics to wider dialogue

Researchers have long been familiar with publication points, h‑indices[4] and journal impact factors as standard evaluation methods: simple indicators that condense performance into one or two numbers. By contrast, Open Science–inspired models introduce multiple dimensions, qualitative elements and a broader understanding of research contributions, which can increase complexity.

This complexity raises practical questions for implementation, for example in terms of data collection, scope of the evaluation, comparability, and information overload.
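To make concrete how a single metric condenses a publication record, the following minimal Python sketch computes the h-index as defined in note 4. The function name and the two citation lists are hypothetical illustrations; they are not data from Aalborg University and are not part of its indicator.

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)  # most-cited paper first
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank  # the top `rank` papers all have at least `rank` citations
        else:
            break
    return h


# Two invented citation profiles (citations per paper), for illustration only.
profile_a = [25, 8, 5, 3, 3, 1]
profile_b = [4, 4, 4, 3, 0, 0]

print(h_index(profile_a), sum(profile_a))
print(h_index(profile_b), sum(profile_b))
```

Both invented profiles receive the same h-index although their total citation counts differ by a factor of three, which illustrates how much information a single number can hide.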

With complexity, transparency becomes essential. For the Aalborg University indicator, transparency has been a key component, and the indicator is structured with that goal in mind.

Nonetheless, implementation has revealed different levels of transparency across departments. Traditions for management, evaluation and strategic planning vary, and some environments are more accustomed to discussing performance openly than others.

For the individual researcher, a broader assessment can lead to a fairer evaluation that accounts for differences between fields and research areas. However, if broader assessment simply means adding more categories rather than genuinely recognizing diversity, it may instead increase the pressure to perform on a larger range of indicators. Hence, merit structures need to align with how researchers are actually assessed, not only for external grants but also in career development and appointments at research-performing institutions.

Moving forward in coordination

Several Danish public and private funding agencies have also worked towards a CoARA-aligned approach to assessment (Pedersen et al., 2025). Some funding agencies have included narrative elements in their CV templates or moved towards annotated publication lists that describe the impact and relevance of the work for a particular research topic, rather than relying on one-sided bibliometrics such as the h-index (VELUX FONDEN & Villum Fonden, 2024).

Others have worked with their assessment committees to ensure that the changes are reflected in the selection of candidates (Independent Research Fund Denmark, 2025).

In Denmark, coordination and sharing of best practices have become easier with the establishment of a Danish National CoARA Chapter on 3 December 2025.

The chapter organizes annual meetings where funders and research-performing institutions can exchange knowledge and practices and coordinate efforts to ensure alignment and shared understanding. Initiatives such as the national chapter indicate a general willingness across sectors to reform the way research and researchers are assessed, and they demonstrate a commitment to aligning values and principles for assessment.

In conclusion, the reform of research assessment is a chance to measure what we treasure: to reward team science, openness and collaboration with industry, as well as traditional academic outputs. The Aalborg University Research and Innovation Indicator is still in its early stages, and the balance between clarity, fairness and usability will continue to evolve. But the framework reflects a broader shift in research policy: away from counting publications and towards a more nuanced understanding of what research and innovation contribute within disciplines, across the university and in society.

Notes

[1] https://bit.ly/4rXoZ83

[2] See also Declaration on Research Assessment (2012) https://bit.ly/4slh8kq, the Leiden Manifesto (2015) https://bit.ly/4rfMA2v, and the Hong Kong Principles (2019) https://bit.ly/3Nj0ZwY

[3] https://bit.ly/4b6eWGn

[4] The h-index (Hirsch index) is a bibliometric indicator that measures both the productivity and citation impact of a researcher’s publications (editor’s note).

References

Pedersen, D. B., Bennike, K. B., & Melchiorsen, P. M. (2026). From principle to practice in reforming research assessment. Policy Brief. Aalborg Universitet: Aalborg. https://doi.org/10.54337/aau.policybrief.euprra

Pedersen, D. B., Gensby, U., Hedegaard, J., Hansen, O. K., Theilgaard, J. B., & Bjerg Bennike, K. (2025). Emerging Practices in Research Assessment: Cross-Case Analysis of 10 International Funders. Aalborg Universitet. https://doi.org/10.54337/aau.epra2025

Stoustrup, J., Jensen, W., Kristensen, T. N., Larsen, B., Müller, C., Nielsen, T., Albretsen, J., Bjerg Bennike, K., Melchiorsen, P. M., Sivertsen, G., & Stehouwer Øgaard, L. (Trans.) (2023). AAU Forsknings og Innovationsindikator: Til fremme af AAU’s Videnskabelige Publicering og Impact, Samarbejde, Synlighed og Åbenhed. Aalborg Universitet. https://doi.org/10.54337/aau524581687

Uddannelses- og Forskningsministeriet. (2019). Fremtidssikring af forskningskvalitet: Ekspertudvalget for resultatbaseret fordeling af basismidler til forskning.

Independent Research Fund Denmark. (2025). Updated CoARA Action Plan. https://dff.dk/en/about-the-fund/publicationlist/2025/maj/updated-coara-action-plan/

VELUX FONDEN, & Villum Fonden. (2024). CoARA Action Plan 2024. https://villumfonden.dk/sites/default/files/paragraph/field_download/VELUX%20FONDEN%20and%20Villum%20Fonden%20CoARA%20Action%20Plan%202024%20final.pdf

DORA. (2012). San Francisco Declaration on Research Assessment. Declaration on Research Assessment. https://sfdora.org/read/

Coalition for Advancing Research Assessment. (2022). Agreement on Reforming Research Assessment. The European Commission. https://www.coara.org/agreement/the-agreement-full-text/

Hicks, D., Wouters, P., Waltman, L., de Rijcke, S., & Rafols, I. (2015). Bibliometrics: The Leiden Manifesto for research metrics. Nature, 520(7548), 429–431. https://doi.org/10.1038/520429a

Moher, D., Bouter, L., Kleinert, S., Glasziou, P., Sham, M. H., Barbour, V., et al. (2020). The Hong Kong Principles for assessing researchers: Fostering research integrity. PLoS Biology, 18(7), e3000737. https://doi.org/10.1371/journal.pbio.3000737

Melchiorsen, P. M., & Bennike, K. B. (2024). Aalborg University Action Plan in the context of the CoARA Agreement. Zenodo. https://doi.org/10.5281/zenodo.10911186